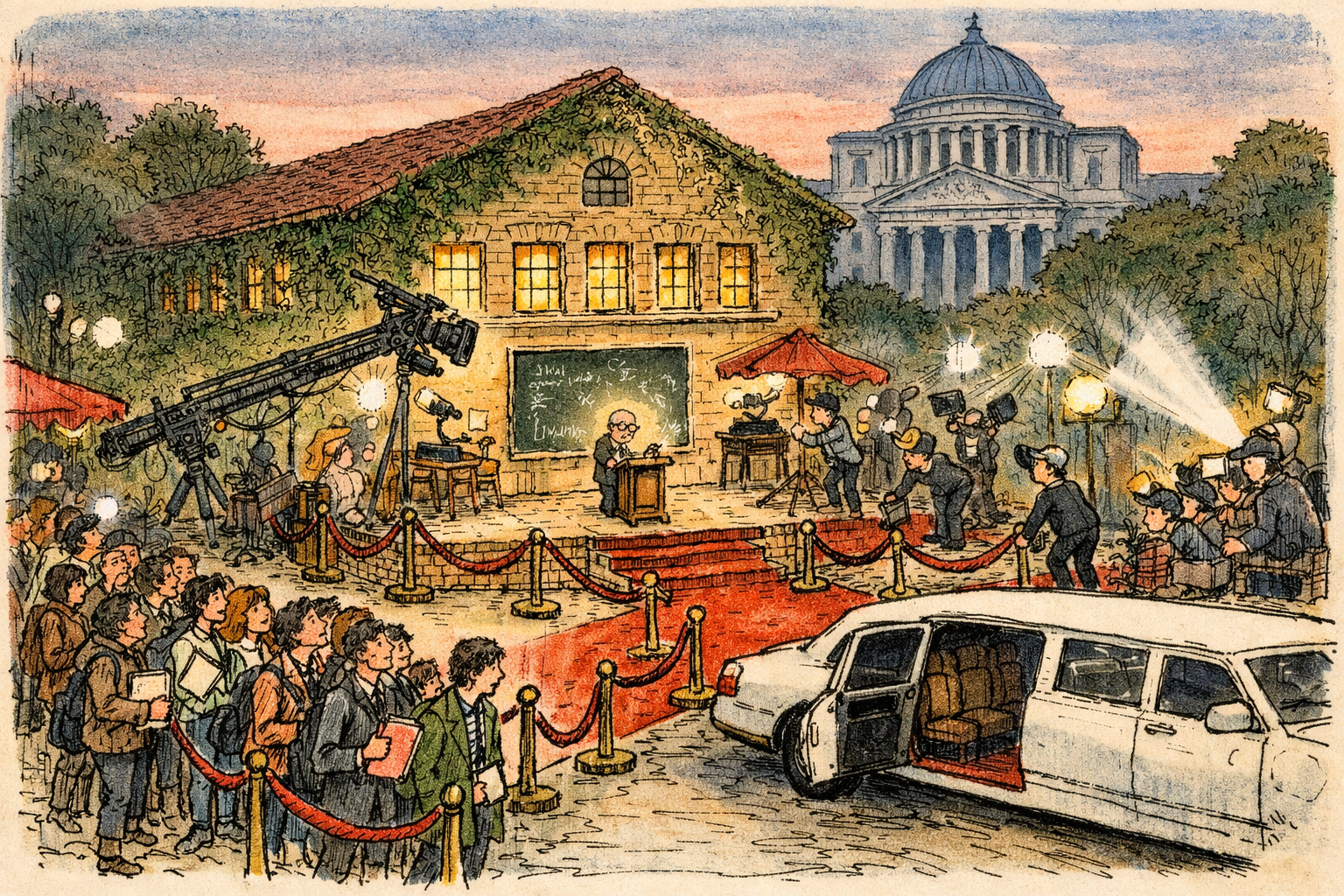

John Ternus’s AI Moment: Apple’s Hardware Heir Must Build Trust, Not Just Devices

On September 1, 2026, Tim Cook moves to executive chairman and John Ternus becomes CEO. Apple’s near-term fortunes will be judged on whether it can ship a trusted, mass-market AI product, not just a shinier iPhone. Ternus’s hardware instincts powered Apple’s past success; they will become a liability if he treats AI as another component to perfect rather than a platform that must be rebuilt around software, models, data partnerships, and public trust.