Thursday, April 23, 2026

03 signals · 06:00 UTC

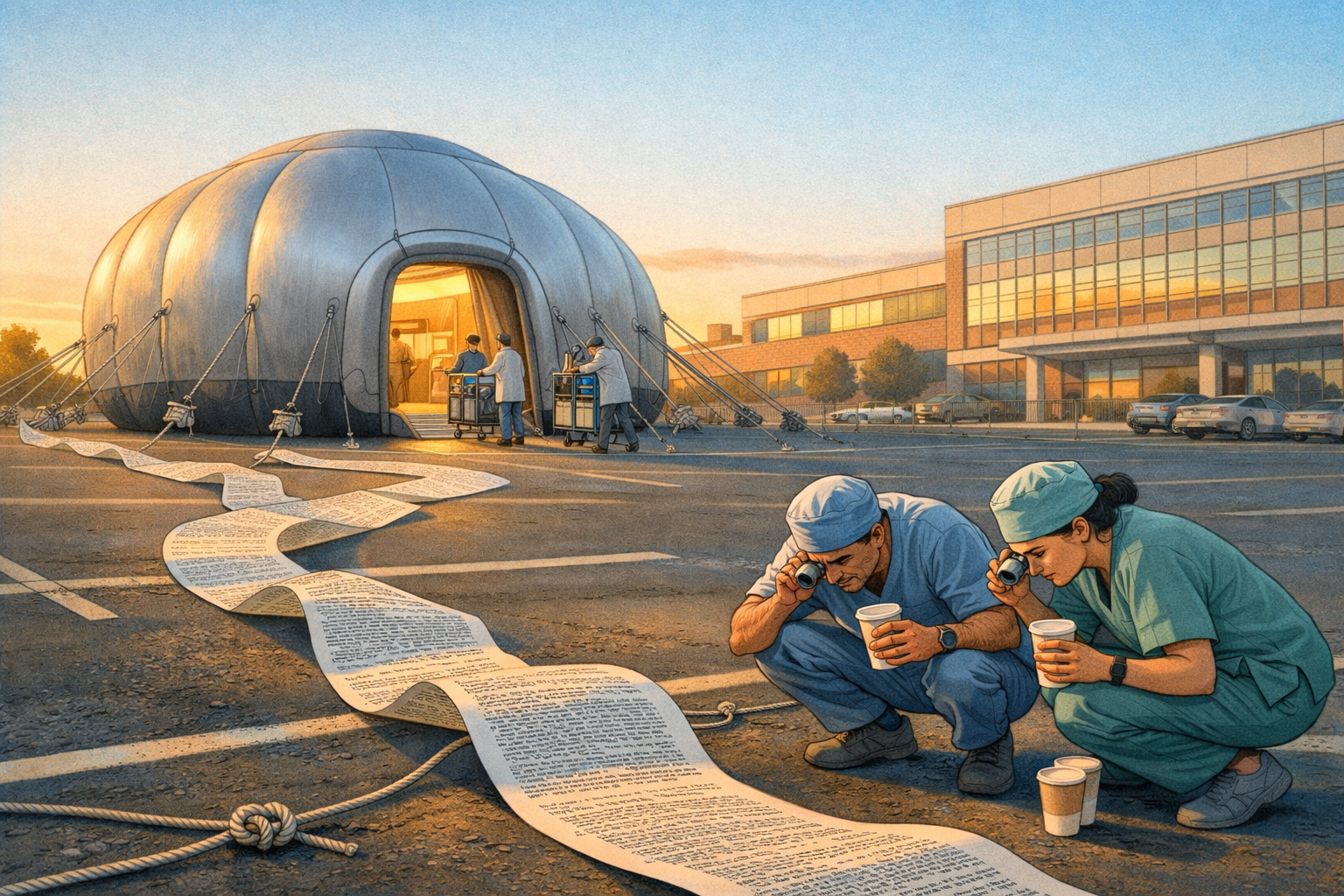

Benchmark Wins Don't Make ChatGPT Clinically Safe

OpenAI's claim that ChatGPT‑5.4 outperformed specialty‑matched physicians is a benchmark victory, not proof of safe, generalizable clinical competence. Treat it as an invitation to rigorous, context‑specific validation, not clearance to rely on the model in practice.

Black‑box image generation vs. developer hunger for provenance

Developers and researchers are frustrated but curious. People are excited about the quality jump in 'Images 2.0' but annoyed that the system acts like a black box: Is the prompt rewritten? Which internal model generated what? Some are digging through network inspectors and calling for the return of visible intermediate prompts (DALL·E 3-era transparency). The tone is pragmatic impatience, because this matters for debugging, reproducibility, and attribution.

Image models getting 'anchored' and memetic quirks (the 'squashmaxxed' worry)

Playful amazement with a worrying undercurrent. People are sharing delightful, bizarre outputs (paintings as cakes, galleries of shoes matched to famous works) and joking about models getting stuck on a motif. Emollick's 'squashmaxxed' line captures the anxiety that a strong signal in training or prompts can make models overproduce a narrow aesthetic, degrading variety. The mood swings between amused demo-sharing and serious notes about robustness and mode collapse.

Platform governance and model lifecycle anxiety

Developers are on edge about breaking changes, deprecations, and opaque policy moves. Simon's correction about a supposed deprecation and reminders of prior embedding model shutdowns fuel a broader distrust: teams worry that features they rely on will vanish and that announcements and rollouts are messy. The sentiment is wariness: people are monitoring for regressions and scrambling to adapt code and expectations.