Friday, April 24, 2026

02 signals · 06:00 UTC

While AI Parties, Amazon Rewires the Stack

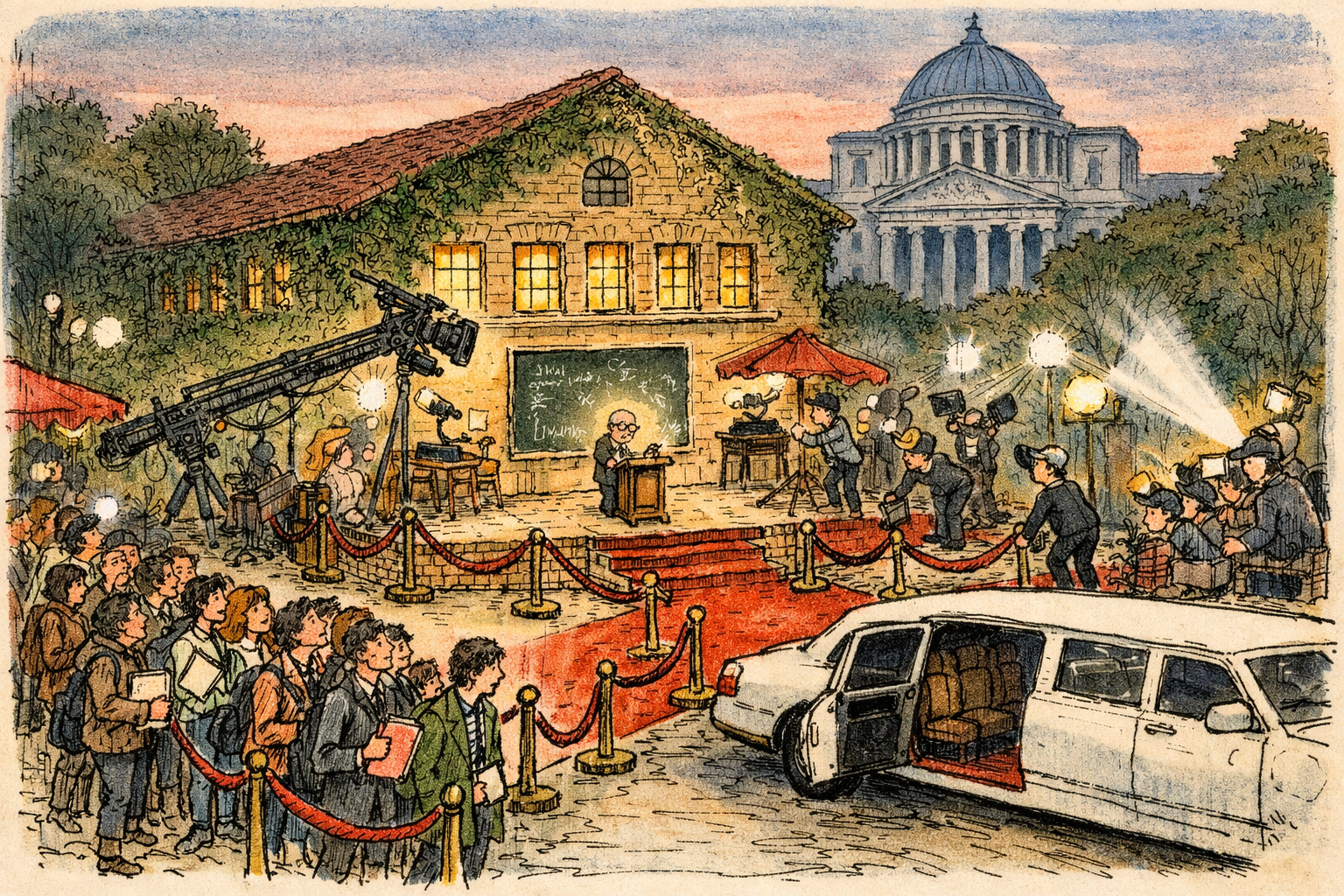

Starry lectures and celebrity demos dominate headlines, but Amazon has quietly turned capital and contracts into control. Equity stakes plus multi‑year, multi‑gigawatt compute commitments are positioning AWS as the operating layer where the next generation of large models will be designed, trained and commercialized. Regulators and reporters seeking leverage should follow chip orders and procurement schedules, not the showbiz.

DeepSeek v4 release — cheap, open weights, and the credibility question

The community is buzzing about DeepSeek v4 because it ships open weights and aggressive pricing, which many see as strategically huge (lowering barriers and forcing competitors to respond). Enthusiasm is tempered by healthy skepticism: folks want to know whether benchmark parity means real-world parity, and some warn that open models shift the battleground to engineering, quantization, and deployment rather than raw benchmark numbers.

GPT-5.5 reactions — impressed with capabilities, annoyed by jaggedness and hype

People who tested GPT-5.5 report real capability gains (especially the Pro variant) and concrete use-case wins — but the mood is mixed: admiration for the step-up sits alongside frustration about rough edges, inconsistency, and the reflex to immediately crown a single 'winner.' Many voices are nudging the community to avoid frantic provider-switching and to focus on what actually integrates into workflows.

Image models behaving weirdly — funny artifacts, stylistic divergence, and quality comparisons

Image outputs are a favorite low-effort battleground: folks are trading amusing and worrying examples that expose stylistic shifts, hallucinated details, or surprising artifacts. The tone is playful but probing — people use side-by-sides to call out where models diverge and to ask whether the new versions are better, just different, or brittle in edge cases.