Saturday, April 25, 2026

00 signals · 06:00 UTC

Benchmarks, metrics and orchestration for multi-agent systems

People are converging on a practical frustration: building agents is only half the problem. Getting multiple agents to work together, and measuring that work, is the other half, and our current metrics (along with intuitions like “intelligence per token”) feel inadequate. The conversation mixes urgency (this is seen as the next economic frontier) with skepticism about simplistic metrics and a demand for better benchmarks that capture coordination, cost, and real-world utility.

Model churn, regressions and fickle loyalties

The pace of model releases is creating a whipsaw: some people migrate between Claude, GPT-5.5 and other models based on perceived quality, while others are loudly frustrated when a new release feels worse (Opus 4.7). Sentiment is simultaneously celebratory (when a model returns great results) and wary: users expect rapid iteration but are increasingly vocal about regressions and opaque changes.

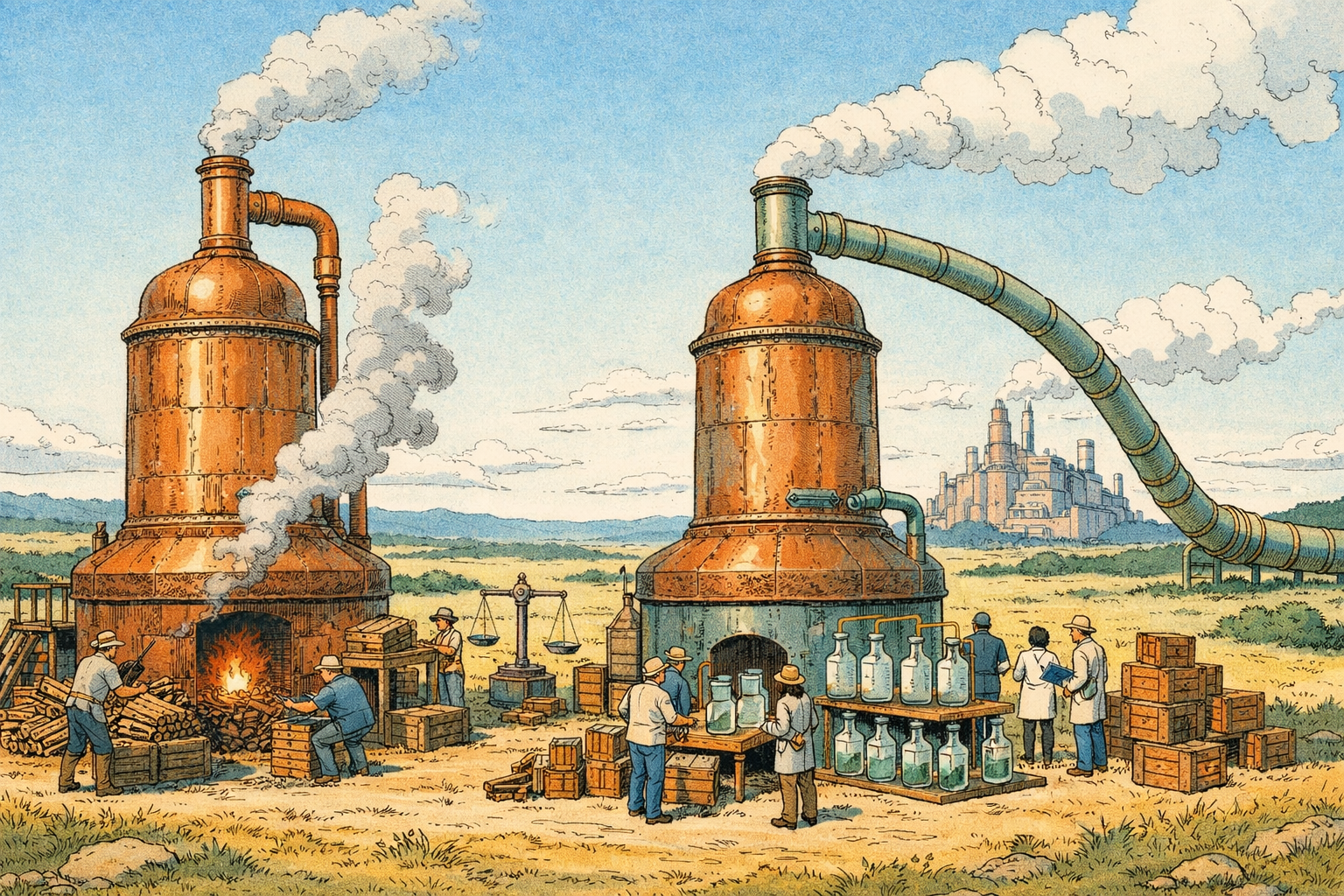

Distillation, open research and the economics of openness

A recurring, pragmatic thread: open research frequently depends on distilling larger models into smaller, cheaper variants, and that has shaped what 'open' labs can actually build. Folks want more systematic study (and funding) to compare post-training approaches with and without distillation. The vibe is constructive: people are pointing out a structural truth and asking for better public evidence to guide policy and funding.